The Accelerating Gap Between Attack and Defense in the Age of AI


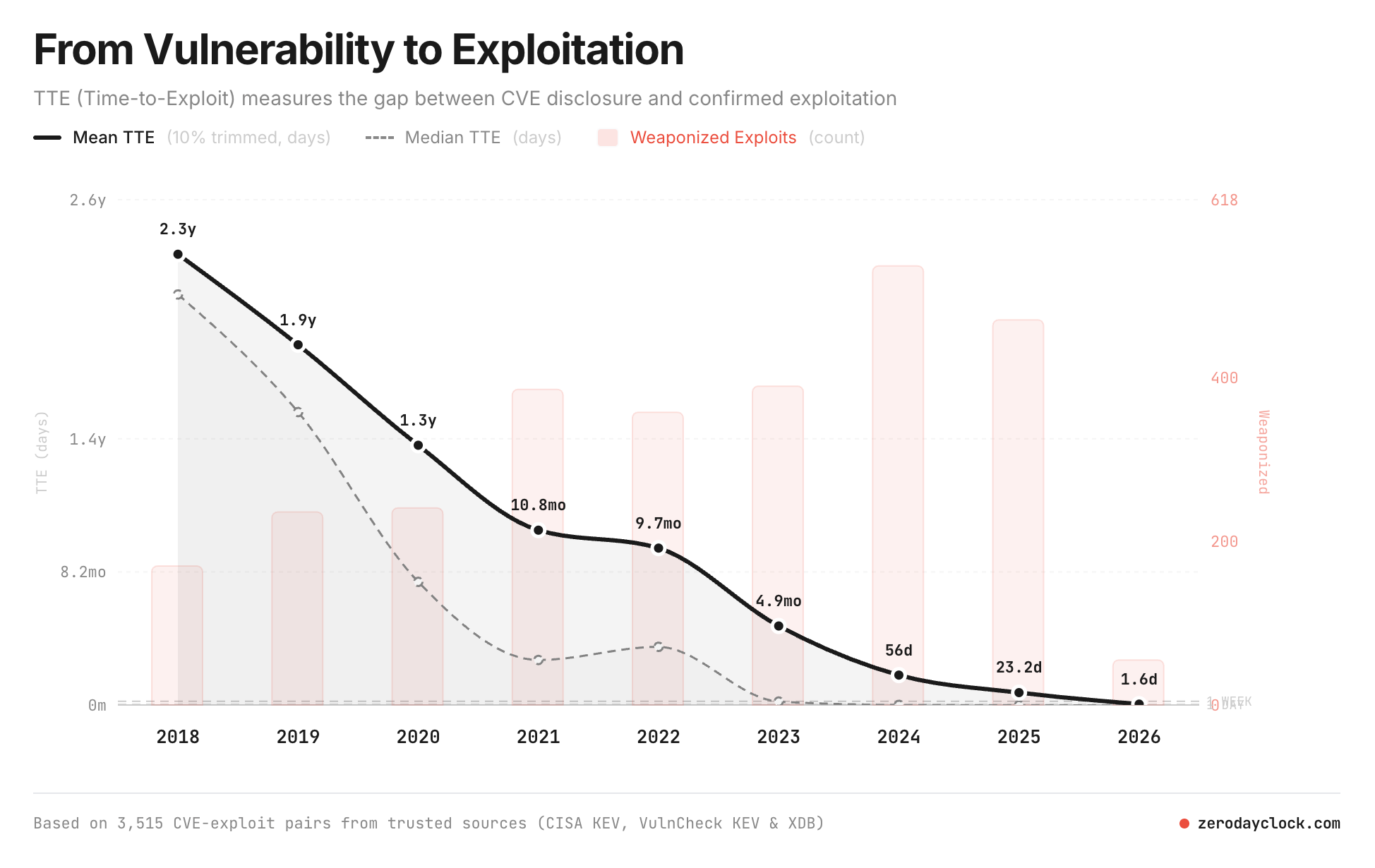

The time from vulnerability disclosure to working exploit code has reportedly shrunk from 2.3 years to 1.6 days.

Source: zerodayclock.com


AI-powered vulnerability analysis, exploit writing, and large-scale scanning are getting faster every day. Setting aside whether AI is smarter than humans, its speed already seems to be beyond what humans can keep up with.

So can defense keep up with this speed?

Even when a patch exists, there is always a physical time gap, a “patch gap,” before it is actually deployed to systems. From what I heard during previous research, even after a smartphone vulnerability is patched, the fix can take at least six months to reach end-user devices through hundreds of carriers worldwide.

These gaps are gradually shrinking, but can patching ever keep pace with the speed of attacks? On top of that, users do not always update immediately. For these reasons, attacks exploiting the patch gap could actually grow.
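To make the patch gap concrete, here is a rough back-of-the-envelope sketch using the figures quoted above. It assumes, purely for illustration, that the exploit clock and the patch-rollout clock start on the same day and that six months is about 180 days; the function name `exposure_window_days` is hypothetical.

```python
def exposure_window_days(time_to_exploit_days: float, time_to_deploy_days: float) -> float:
    """Days during which a working exploit exists but the patch has not yet reached the device.

    Assumption: both durations are measured from the same starting day.
    """
    return max(0.0, time_to_deploy_days - time_to_exploit_days)


# Figures quoted in this post; 180 days is an approximation of "6 months".
PATCH_ROLLOUT_DAYS = 180.0

# When exploits took about 2.3 years, the patch arrived long before the exploit.
window_before = exposure_window_days(2.3 * 365, PATCH_ROLLOUT_DAYS)

# With exploits arriving in about 1.6 days, almost the whole rollout period is exposed.
window_now = exposure_window_days(1.6, PATCH_ROLLOUT_DAYS)

print(f"exposure window before: {window_before:.1f} days")
print(f"exposure window now:    {window_now:.1f} days")
```

Under these assumptions, the exposed period goes from nothing to nearly the entire rollout time, which is why a faster exploit clock matters even if patching itself gets no slower.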

AI has already reached the stage of finding vulnerabilities at scale. AI security startup AISLE found 12 CVEs in OpenSSL, and model companies such as Anthropic and OpenAI (with its Aardvark agent) are also conducting AI-based vulnerability analysis.

If LLMs can find vulnerabilities with high accuracy and even suggest patches, and if this can be adopted at the development stage, where does the pentester's role shift?

What should vulnerability assessors focus on going forward?

Before, the question was: “Does this code have a vulnerability?” Now, the questions are a bit different. “What attack paths can this vulnerability actually create?” “Even if this system is compromised, how far can the impact reach?”

Is it realistic to completely eliminate vulnerabilities? Probably not. Designing structures that limit the scope of damage even when vulnerabilities exist, i.e., reducing the blast radius, may become the more important problem. Google’s recent acquisition of Wiz, a company specializing in attack path analysis and simulation, seems related to this trend. Analyzing what attack paths a vulnerability can actually create is becoming more important than just finding the vulnerability itself.
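As a minimal sketch of what "attack path" and "blast radius" can mean in practice, the snippet below models an environment as a directed graph and asks two questions: what can an attacker reach from a compromised asset, and by which routes. The asset names and edges are entirely hypothetical, and this is a toy reachability model, not how Wiz or any other product actually works.

```python
from collections import deque

# Hypothetical environment: an edge A -> B means
# "compromising A lets an attacker reach B".
EDGES = {
    "web-frontend": ["api-server"],
    "api-server": ["orders-db", "ci-runner"],
    "ci-runner": ["artifact-store"],
    "orders-db": [],
    "artifact-store": [],
    "hr-portal": ["hr-db"],
    "hr-db": [],
}


def blast_radius(edges, start):
    """Return every asset reachable from `start` (excluding `start`) via breadth-first search."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    seen.discard(start)
    return seen


def attack_paths(edges, start, target, path=None):
    """Yield every cycle-free path from `start` to `target` (depth-first)."""
    path = (path or []) + [start]
    if start == target:
        yield path
        return
    for nxt in edges.get(start, []):
        if nxt not in path:  # skip nodes already on this path
            yield from attack_paths(edges, nxt, target, path)


radius = blast_radius(EDGES, "web-frontend")
paths = list(attack_paths(EDGES, "web-frontend", "artifact-store"))

# Segmentation experiment: cut the api-server -> ci-runner edge and re-measure.
segmented = dict(EDGES, **{"api-server": ["orders-db"]})
radius_after = blast_radius(segmented, "web-frontend")
```

In this toy graph, a vulnerability in `web-frontend` reaches four other assets, while cutting a single edge halves that. The same bug carries a very different impact depending on the structure around it.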

Simply finding vulnerabilities in given code may not be sufficient on its own. Understanding the context of the entire system across multiple domains (operational environment configurations, permission structures, interconnections with other systems, actual service flows) may become the more critical problem.

There are some interesting attempts as well. With its Code World Model, Meta has shown an approach that does not treat code simply as tokens or words, but instead models the flow of code execution and state changes.

This is still experimental research, but if such approaches are applied to the security domain, it may become possible to go beyond simply finding code vulnerabilities to understanding how and in what context a system can fail.

Flexible response capabilities, the ability to shift thinking quickly, will become increasingly important going forward.